P99 latency (99th percentile latency) is the response time that 99% of requests complete within; only the slowest 1% take longer. It describes the "tail" of the latency distribution: not the absolute worst case, but the slow experience that a meaningful number of users still encounter.

Percentile metrics are preferred over averages for latency measurement because an average hides the shape of the distribution: a large number of fast requests can mask a small number of very slow ones. A service with an average latency of 100ms might have a P99 of 2,000ms, meaning 1 in 100 requests takes 2 seconds or longer. Commonly used percentiles are P50 (the median, or typical experience), P95 (the threshold for the slowest 5%), P99 (the slowest 1%), and P99.9, also written P999 (the slowest 0.1%).
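To make the gap between average and tail latency concrete, here is a minimal Python sketch. The sample data is hypothetical (980 requests at 80ms and 20 at 2,000ms), and the `percentile` helper is an illustrative nearest-rank implementation, not the method used by any particular monitoring tool; other tools may interpolate between samples and report slightly different values.

```python
import math
import statistics


def percentile(samples, p):
    """Nearest-rank percentile: the smallest sample such that
    at least p percent of all samples are less than or equal to it."""
    if not samples:
        raise ValueError("samples must not be empty")
    if not 0 < p <= 100:
        raise ValueError("p must be in the range (0, 100]")
    ordered = sorted(samples)
    # 1-based rank of the sample that covers p percent of the data.
    rank = math.ceil(p * len(ordered) / 100)
    return ordered[rank - 1]


# Hypothetical latencies in milliseconds: 98% fast, 2% very slow.
latencies_ms = [80] * 980 + [2000] * 20

mean_ms = statistics.mean(latencies_ms)
p50_ms = percentile(latencies_ms, 50)
p95_ms = percentile(latencies_ms, 95)
p99_ms = percentile(latencies_ms, 99)

print(f"mean={mean_ms:.1f}ms p50={p50_ms}ms p95={p95_ms}ms p99={p99_ms}ms")
```

With this data the mean, P50, and P95 all look healthy, and only P99 exposes the slow requests. That is why tail percentiles are usually tracked alongside the median rather than instead of it.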

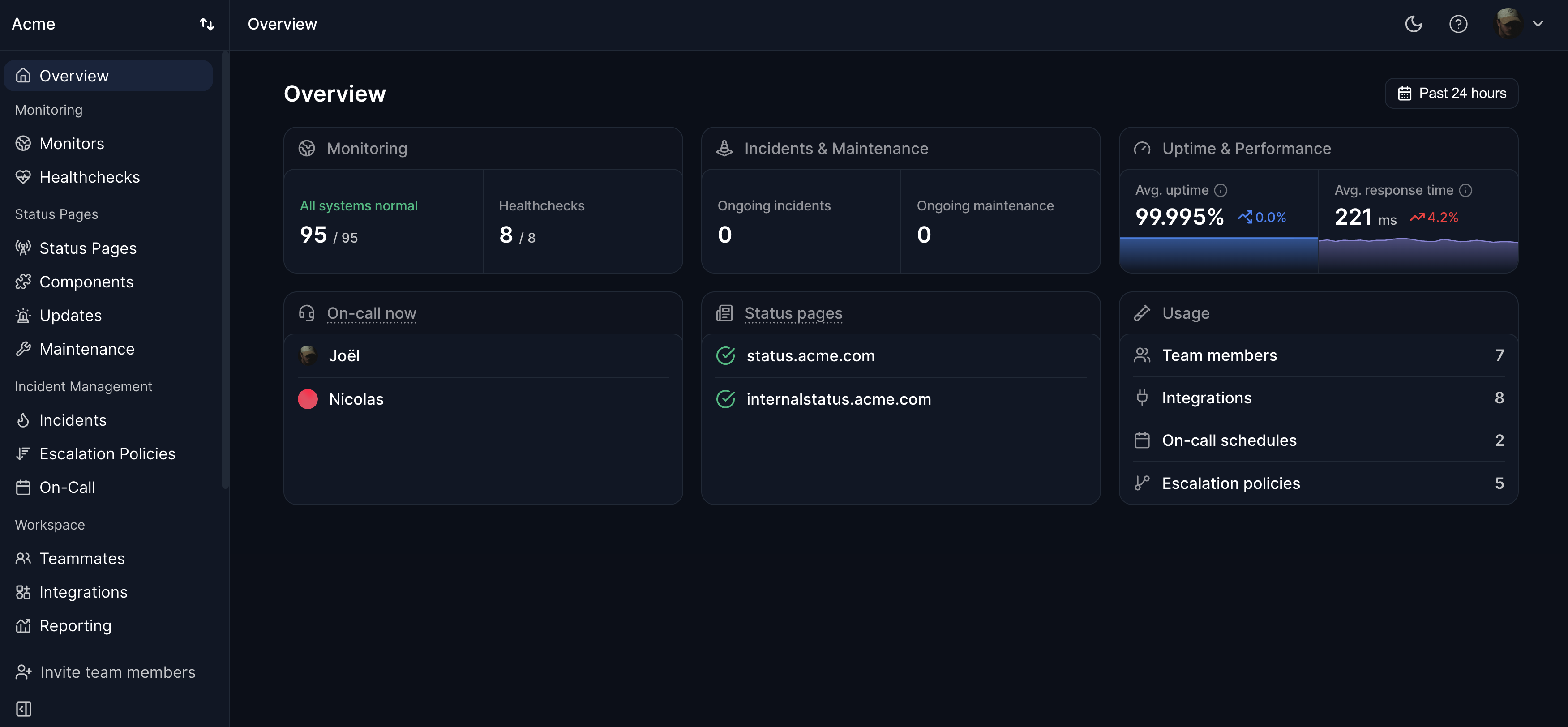

High P99 latency often indicates resource contention, garbage collection pauses, slow database queries affecting a subset of requests, or cold starts in auto-scaling systems. Because these problems hit only some requests, fixing P99 issues often requires different approaches than fixing P50 issues, such as connection pooling, query optimization, or cache warming. Response time monitoring with Hyperping tracks how your service performs for real users from multiple global locations.