Latency is the time delay between initiating an action (such as sending an HTTP request) and receiving the result (the response). In web services, latency is typically measured in milliseconds and is one of the most important indicators of user experience and service health.
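
As a rough illustration of that definition, the sketch below times a single action from start to result. The helper name `measure_latency_ms` is hypothetical, and `time.sleep` stands in for a real HTTP request so the example runs without a network.

```python
import time
from typing import Callable, Tuple, TypeVar

T = TypeVar("T")


def measure_latency_ms(action: Callable[[], T]) -> Tuple[T, float]:
    """Run `action` once and return its result plus the elapsed time in ms.

    Hypothetical helper for illustration only.
    """
    start = time.perf_counter()  # monotonic, high-resolution clock
    result = action()
    elapsed_ms = (time.perf_counter() - start) * 1000.0
    return result, elapsed_ms


def fake_request() -> str:
    # Stand-in for a real request: pretend the server takes about 20 ms.
    time.sleep(0.02)
    return "ok"


result, latency_ms = measure_latency_ms(fake_request)
print(f"result={result!r} latency={latency_ms:.1f} ms")
```

A single measurement like this is noisy. In practice many samples are collected and summarized.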

Latency is usually analyzed at percentiles rather than averages, because averages can hide outliers. P50 (median) latency shows the typical experience, P95 is the latency that 95% of requests complete within, and P99 captures the tail latency that the slowest 1 in 100 requests exceed. A service with a low average but a high P99 may still deliver a poor experience for a significant number of users, since at high request volumes even 1% of requests is a large absolute number.
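
To make the gap between average and tail concrete, here is a minimal sketch that computes percentiles with the nearest-rank method. This is one of several common percentile definitions, and monitoring tools may interpolate differently. The `percentile` helper and the sample data are assumptions made up for illustration.

```python
import math
from statistics import mean
from typing import Sequence


def percentile(samples: Sequence[float], p: float) -> float:
    """Nearest-rank percentile: the smallest sample such that at least
    p percent of all samples are less than or equal to it."""
    if not samples:
        raise ValueError("samples must not be empty")
    if not 0 < p <= 100:
        raise ValueError("p must be in the range (0, 100]")
    ordered = sorted(samples)
    rank = math.ceil(p * len(ordered) / 100)  # 1-based rank
    return ordered[rank - 1]


# Hypothetical data: 98 fast requests at 40 ms and 2 slow ones at 2000 ms.
latencies_ms = [40.0] * 98 + [2000.0] * 2

print(f"mean = {mean(latencies_ms):.1f} ms")
for p in (50, 95, 99):
    print(f"P{p}  = {percentile(latencies_ms, p):.1f} ms")
```

With this made-up data the mean is 79.2 ms and both P50 and P95 are 40 ms, yet P99 is 2000 ms: the two slow requests barely move the average but dominate the tail.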

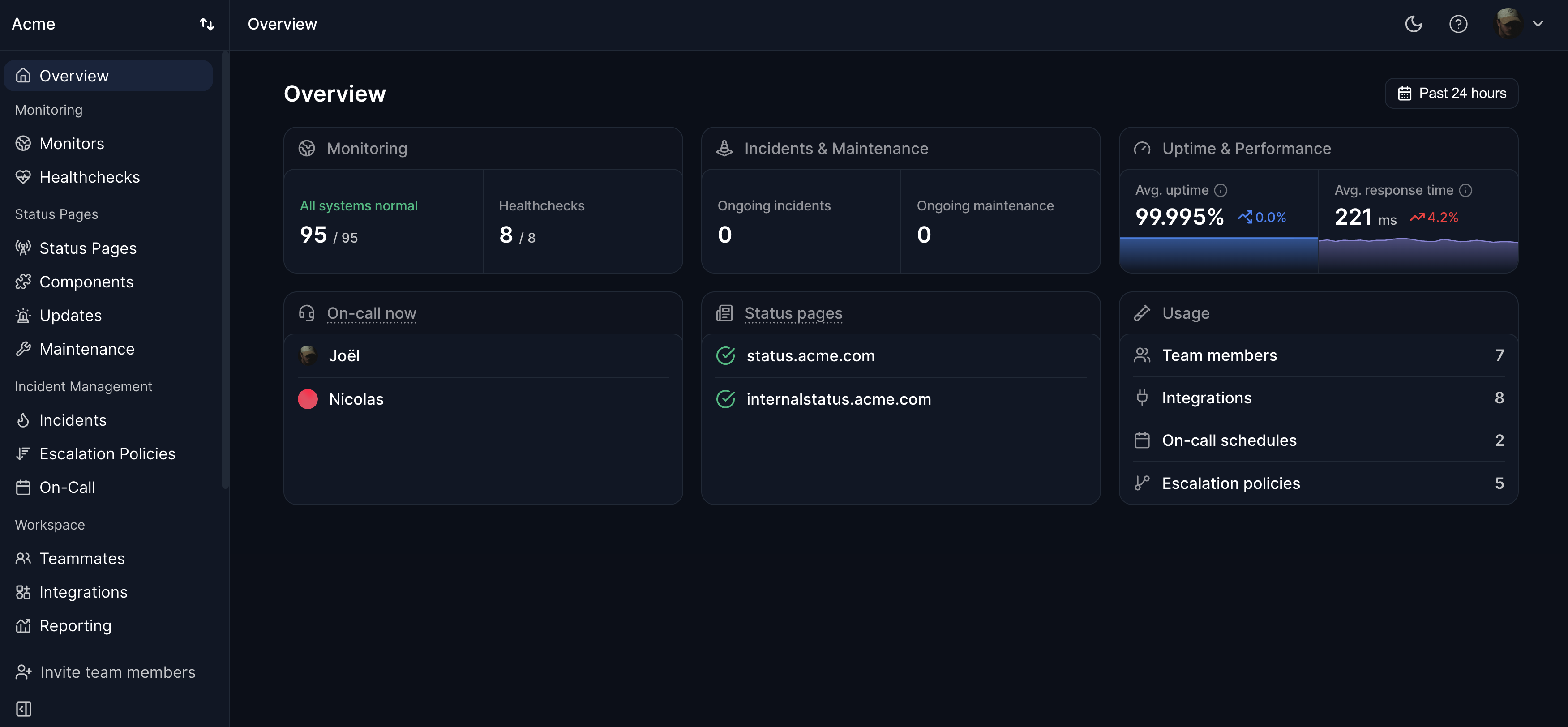

Common causes of high latency include network congestion, slow database queries, inefficient code, cold starts in serverless environments, and geographic distance between the user and the server. Monitoring response time from multiple regions, as Hyperping does, helps identify latency issues, including ones that affect only some locations, and track performance over time.