Throughput measures the volume of work a system can handle in a given time period, most commonly expressed as requests per second (RPS) or transactions per second (TPS) for web services. It is a key capacity metric that indicates whether a system can handle its current and expected load.

Throughput and latency are related but distinct metrics. A system can have high throughput (processing many requests per second) and still have high latency (each individual request takes a long time), for example when it works on many requests in parallel. Conversely, a system can have low latency but low throughput if it can only handle a few requests at a time. Ideally, you want high throughput with low latency.
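One way to make this relationship concrete is Little's Law, which says that the average number of requests in flight equals throughput multiplied by average latency. The sketch below rearranges it to estimate the throughput ceiling for a given concurrency limit. The function name `max_throughput` and the example numbers are illustrative assumptions, not measurements from a real system.

```python
def max_throughput(concurrency: int, latency_seconds: float) -> float:
    """Estimate the throughput ceiling in requests per second.

    Little's Law: in_flight = throughput * latency,
    so throughput = in_flight / latency.
    """
    if latency_seconds <= 0:
        raise ValueError("latency_seconds must be positive")
    return concurrency / latency_seconds


# High throughput despite high latency: 1000 requests in flight, 2 s each.
parallel_rps = max_throughput(concurrency=1000, latency_seconds=2.0)

# Low latency but lower throughput: only 2 requests in flight, 10 ms each.
small_rps = max_throughput(concurrency=2, latency_seconds=0.010)

print(f"parallel system: {parallel_rps:.0f} RPS")
print(f"small system:    {small_rps:.0f} RPS")
```

In this hypothetical comparison the slower system still serves more requests per second, because it keeps far more requests in flight at once.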

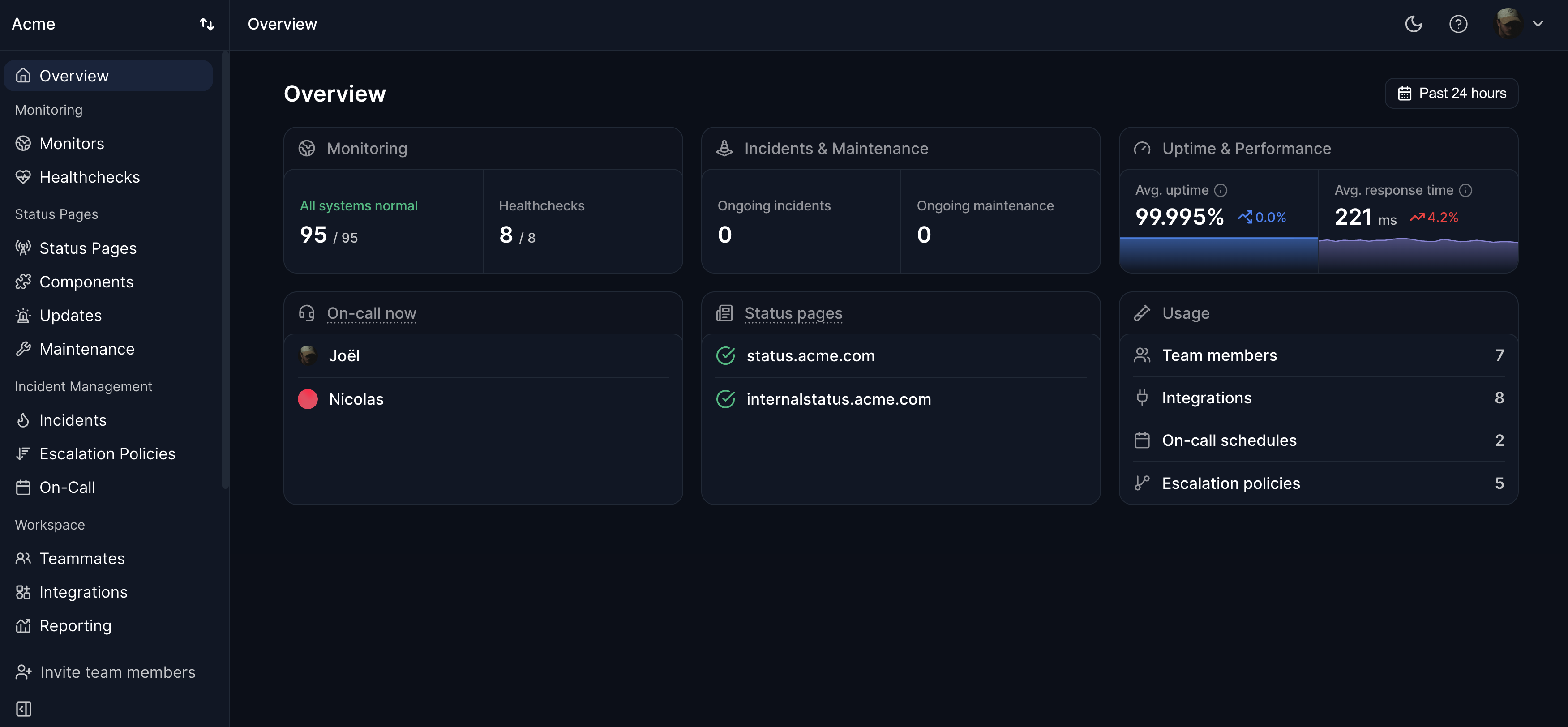

Monitoring throughput helps teams plan capacity, detect traffic anomalies, and identify bottlenecks. A sudden drop in throughput might indicate a failing backend service, while a sudden spike might indicate a legitimate traffic surge or a DDoS attack. Uptime monitoring tools like Hyperping focus on availability and response time from the user's perspective, complementing internal throughput monitoring.
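As a rough illustration of internal throughput monitoring, the sketch below counts requests per second from a list of request timestamps and flags seconds that fall far below or above the median. The function names, the threshold ratios (`drop_ratio`, `spike_ratio`), and the choice of the median as a baseline are all assumptions made for this example; production systems typically rely on a metrics pipeline and more robust anomaly detection.

```python
from collections import Counter
from statistics import median


def requests_per_second(timestamps):
    """Count requests in each one-second bucket, including empty buckets.

    `timestamps` is an iterable of request times in seconds (e.g. Unix epoch).
    """
    counts = Counter(int(ts) for ts in timestamps)
    if not counts:
        return {}
    start, end = min(counts), max(counts)
    return {sec: counts.get(sec, 0) for sec in range(start, end + 1)}


def flag_anomalies(rps_by_second, drop_ratio=0.5, spike_ratio=2.0):
    """Label seconds whose RPS is far below or far above the median RPS."""
    if not rps_by_second:
        return {}
    baseline = median(rps_by_second.values())
    flags = {}
    for sec, rps in rps_by_second.items():
        if rps < baseline * drop_ratio:
            flags[sec] = "drop"    # possible failing backend
        elif rps > baseline * spike_ratio:
            flags[sec] = "spike"   # possible traffic surge or attack
    return flags


# Synthetic traffic: steady 10 RPS, a dip in second 3, a burst in second 5.
per_second_counts = [10, 10, 10, 2, 10, 30]
timestamps = [
    sec + i / (n + 1)
    for sec, n in enumerate(per_second_counts)
    for i in range(n)
]

rps = requests_per_second(timestamps)
print(flag_anomalies(rps))
```

Comparing against the median rather than the mean keeps a single large burst from shifting the baseline, though either choice is a simplification.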