Load balancing is the practice of distributing incoming requests across a pool of backend servers to optimize resource utilization, maximize throughput, minimize response time, and avoid overloading any single server. Load balancers act as reverse proxies, sitting between clients and servers.

Common load balancing algorithms include round-robin (distributing requests evenly in rotation), least connections (sending to the server with fewest active connections), weighted distribution (sending more traffic to more powerful servers), and IP hash (routing the same client to the same server for session affinity).
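The four strategies above can be sketched in a few lines of Python. This is a minimal illustration, not how any particular load balancer implements them: the `Backend` class, its fields, and the function names are hypothetical, and the weighted variant uses the simplest possible approach (repeating each backend `weight` times per cycle).

```python
import hashlib
import itertools
from dataclasses import dataclass


@dataclass
class Backend:
    """Hypothetical backend record used only for this sketch."""
    name: str
    weight: int = 1              # relative capacity, used by weighted selection
    active_connections: int = 0  # updated by the balancer as requests open/close


def round_robin(backends):
    """Return an iterator that yields backends in a fixed rotation."""
    return itertools.cycle(backends)


def least_connections(backends):
    """Pick the backend with the fewest active connections right now."""
    return min(backends, key=lambda b: b.active_connections)


def weighted_round_robin(backends):
    """Rotate through backends, repeating each one `weight` times per cycle."""
    expanded = [b for b in backends for _ in range(b.weight)]
    return itertools.cycle(expanded)


def ip_hash(backends, client_ip):
    """Map a client IP to a backend with a stable hash, so the same
    client lands on the same backend while the pool is unchanged."""
    digest = hashlib.sha256(client_ip.encode("utf-8")).digest()
    index = int.from_bytes(digest[:8], "big") % len(backends)
    return backends[index]


if __name__ == "__main__":
    pool = [Backend("a", weight=3), Backend("b"), Backend("c")]
    rotation = round_robin(pool)
    print([next(rotation).name for _ in range(4)])
    print(ip_hash(pool, "203.0.113.7").name)
```

Two simplifications are worth noting. The weighted rotation sends consecutive requests to the same heavy backend, so real implementations usually interleave the picks. The modulo-based IP hash reassigns most clients whenever the pool size changes; consistent hashing is the usual way to limit that churn.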

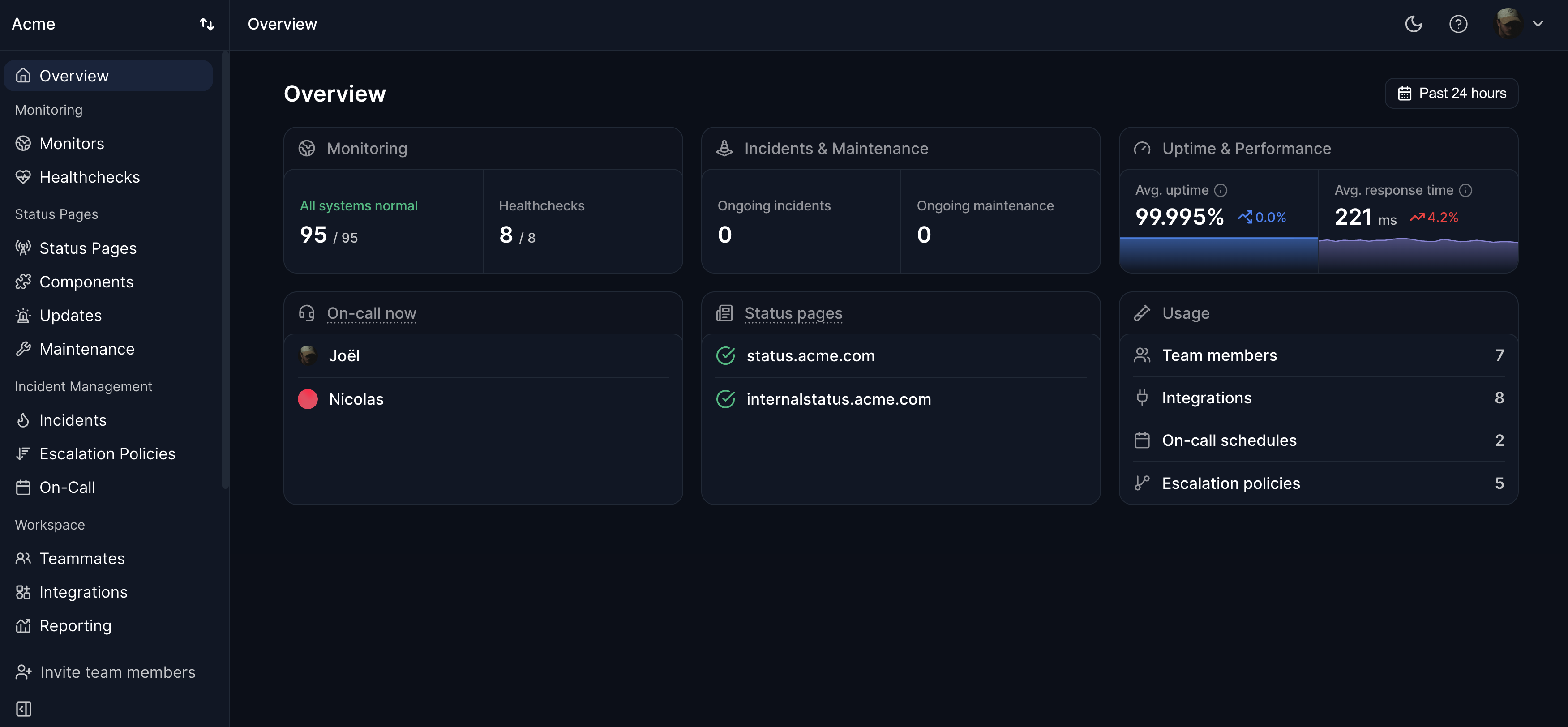

Load balancers also perform health checks on backend servers and automatically remove unhealthy servers from the pool. This is a form of automatic failover at the application layer. Modern cloud platforms provide managed load balancing services (AWS ALB/NLB, Google Cloud Load Balancing, Cloudflare Load Balancing). Monitoring the load balancer endpoint with Hyperping helps you detect issues with the load balancer itself, which can fail even when the individual backend servers are still healthy.
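The health-check behaviour described above can be sketched as a pool that probes each server and drops it from rotation after repeated failures. This is a hedged illustration only: `HealthCheckedPool`, the `probe` callback, and both threshold parameters are hypothetical names, and re-adding a server after it recovers is an assumption beyond what the text states. In practice the probe would be something like an HTTP request to a health endpoint; here it is injected so the logic can be run without a network.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Set


@dataclass
class HealthCheckedPool:
    """Hypothetical pool that tracks which servers may receive traffic."""
    servers: List[str]
    probe: Callable[[str], bool]   # returns True if the server looks healthy
    unhealthy_threshold: int = 3   # consecutive failures before removal (assumed)
    healthy_threshold: int = 2     # consecutive successes before re-adding (assumed)
    _failures: Dict[str, int] = field(default_factory=dict)
    _successes: Dict[str, int] = field(default_factory=dict)
    _down: Set[str] = field(default_factory=set)

    def run_checks(self) -> None:
        """Probe every server once and update its health state."""
        for server in self.servers:
            if self.probe(server):
                self._failures[server] = 0
                self._successes[server] = self._successes.get(server, 0) + 1
                recovered = self._successes[server] >= self.healthy_threshold
                if server in self._down and recovered:
                    self._down.discard(server)
            else:
                self._successes[server] = 0
                self._failures[server] = self._failures.get(server, 0) + 1
                if self._failures[server] >= self.unhealthy_threshold:
                    self._down.add(server)

    def healthy(self) -> List[str]:
        """Servers currently eligible to receive traffic."""
        return [s for s in self.servers if s not in self._down]
```

Requiring several consecutive failures before removal avoids ejecting a server because of a single slow or dropped probe, at the cost of reacting a little later to a real outage. Calling `run_checks()` on a timer and routing only to `healthy()` gives the automatic failover the paragraph describes.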