Most companies run post-mortems like autopsies. They dissect the corpse, assign blame, and file it away. The body count keeps rising.

Here's what actually works: post-mortems as learning machines. Systems thinking over finger-pointing. Patterns over pain.

What you'll get: A copy-paste template, real metrics that matter, and the mindset shift that turns outages into intelligence.

Who this is for: SRE leads tired of repeating incidents. Engineering managers who want learning over theater. Anyone who's sat through a post-mortem that felt like a trial.

TL;DR:

- Post-mortems as learning machines, not blame sessions

- Copy-paste template included

- Focus on systems thinking and pattern recognition

- Metrics that actually improve reliability

- Compliance requirements (ISO 27001, NIST, SOC 2)

What is an incident post-mortem?

A structured, written analysis after something breaks. You document what happened, the impact, contributing factors, and follow-ups to reduce recurrence, ideally in the same consistent format as your incident communication templates.

Different teams call it different things:

| Term | Focus | When Used | Industry |

|---|---|---|---|

| Post-mortem | Overall learning | After any incident | Most common, tech |

| Root cause analysis (RCA) | Finding single cause | Major failures | Traditional ops |

| After-action review (AAR) | Military-style debrief | Complex operations | Defense, healthcare |

| Incident review | Neutral terminology | Customer-facing issues | SaaS, enterprise |

| Learning review | Growth mindset | Near-misses included | Safety-critical systems |

The differences matter less than the core function: extracting maximum learning from minimum pain.

Why "blameless" isn't just a nice-to-have philosophy: when people feel safe, they tell you what really happened. When they're scared, they give you the sanitized version. Sanitized versions don't prevent repeat incidents. This is fundamental to modern incident management practices.

The feedback loop: Incident → Post-mortem → Action Items → Trend Analysis → Fewer future incidents. Most teams nail the first step and fumble everything after.

When should you run one?

| Incident Type | Severity | Run PM? | Priority |

|---|---|---|---|

| Customer-facing outage | Sev-1 | Always | High |

| Internal service degradation | Sev-2 | Always | High |

| False alarm that paged team | Any | Yes | Medium |

| Near-miss with systemic risk | Any | Yes | Medium |

| Chronic minor issues | Sev-3+ | When pattern emerges | Low |

| Third-party failure | Any | If exposed gaps | Medium |

| Every minor blip | Sev-4 | No | None |

Determine your severity thresholds using your SLA calculator and uptime calculator. Track cumulative impact with the downtime calculator to identify when minor issues warrant review.
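To make "cumulative impact" concrete, here is a minimal sketch of the arithmetic behind a downtime budget. The 30-day window, the 99.9% target, the incident durations, and the 50% review threshold are all illustrative assumptions, not recommendations.

```python
def downtime_budget_minutes(target_percent: float, window_days: int = 30) -> float:
    """Minutes of downtime the availability target allows within the window."""
    window_minutes = window_days * 24 * 60
    return window_minutes * (100 - target_percent) / 100


def budget_used(incident_minutes: list[float], target_percent: float,
                window_days: int = 30) -> float:
    """Fraction of the downtime budget consumed by the listed incidents."""
    return sum(incident_minutes) / downtime_budget_minutes(target_percent, window_days)


# Hypothetical month: four minor blips, none of which earned a review alone.
minor_incidents = [5, 8, 12, 10]   # minutes of impact each (made-up values)
REVIEW_THRESHOLD = 0.5             # assumed policy: review once half the budget is gone

used = budget_used(minor_incidents, target_percent=99.9)
print(f"Budget: {downtime_budget_minutes(99.9):.1f} min, used: {used:.0%}")
if used >= REVIEW_THRESHOLD:
    print("Cumulative impact warrants a pattern review")
```

At 99.9% over 30 days the budget is about 43 minutes, so four short incidents that look harmless individually can consume most of it together.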

Compliance note: If you're in a regulated industry, you're probably already required to do this. ISO 27001:2022 Annex A 5.27 mandates learning from security incidents. NIST SP 800-61r3 emphasizes post-incident learning within risk management frameworks (NIST Publications).

Don't over-rotate on recency. Let lower-value action items "soak" before committing resources. The goal is signal, not documentation theater.

Roles and ownership

The Incident Commander from your on-call rotation picks a post-mortem owner quickly. This isn't punishment, it's assignment. The owner is usually the person with the clearest memory of the timeline or the deepest system knowledge. See our on-call documentation for rotation best practices.

Cross-functional contributors:

- Incident responders

- Service owners

- Customer communications

- Support team

- Leadership (for context, not control)

| Task | IC | PM Owner | Contributors | Leadership |

|---|---|---|---|---|

| Select owner | Responsible | Informed | - | Consulted |

| Write draft | Consulted | Responsible | Accountable | Informed |

| Facilitate meeting | Informed | Responsible | Consulted | Informed |

| Assign action items | Accountable | Responsible | Consulted | Informed |

| Track completion | Informed | Accountable | Responsible | Consulted |

Good facilitator profiles: Strong writers who can synthesize. Individual contributors with system perspective. Neutral parties who can ask dumb questions without ego.

Facilitation skill matters more than seniority. The best post-mortems come from people who can extract truth without making it feel like interrogation.

Blameless doesn't mean no accountability

"Tough on content, soft on people."

Language rules:

✅ "The deployment process allowed production changes without review" ❌ "John deployed without getting review"

✅ "Alert fatigue contributed to delayed response" ❌ "The on-call engineer ignored alerts"

✅ "Documentation gaps led to configuration error" ❌ "Someone should have known the right settings"

Key definition, Just Culture: "An atmosphere of trust where people are encouraged to provide safety-related information, but clear lines are drawn between acceptable and unacceptable behavior" (Sidney Dekker)

Just Culture basics: You want psychological safety that drives improvement. This means rewarding candor publicly, tracking action items religiously, and making sure leaders don't accidentally punish honesty.

What accountability looks like: Following through on action items. Changing systems based on what you learned. Making the same mistake harder to repeat.

Leader behaviors that reinforce trust:

- Publicly reward people who surface problems

- Track and close action items visibly

- Never use post-mortem details in performance reviews

- Share your own mistakes during reviews

Ditch "single root cause"

TL;DR:

- Complex systems have multiple contributing factors

- Focus on networks of conditions, not single points

- Learn from mitigators (what worked) not just failures

- Five whys doesn't work for non-linear problems

Complex systems don't have single points of failure. They have networks of contributing conditions, mitigators that limited blast radius, and systemic risks waiting to bite you. This understanding is crucial for improving MTTR through better analysis.

"Five whys" works for linear problems. Software systems aren't linear (Allspaw on Kitchen Soap).

| Method | Best For | Limitations | Example |

|---|---|---|---|

| Five Whys | Simple linear failures | Assumes single cause path | Machine restart fixes issue |

| Contributors/Mitigators | Complex system failures | Requires deep system knowledge | Multi-factor outages |

| Causal Analysis | Understanding relationships | Time-intensive | Cascading failures |

| STAMP | Safety-critical systems | Steep learning curve | Aviation, healthcare |

Better framework:

Contributors (what increased likelihood):

- Technical: Race condition in caching layer

- Human: Deploy during peak traffic

- External: AWS region degradation

Mitigators (what limited damage):

- Circuit breakers opened and the service degraded gracefully

- On-call engineer noticed within 3 minutes

- Customer-facing load balancer rerouted traffic

Risks (what could make this worse):

- Key-person dependency on legacy system

- Single region deployment

- No automated rollback for this service type

Learn from what worked, not just what failed. Your mitigators are often more valuable than your root causes (Safety-II principles).

The post-mortem template

Skip the copy-paste: Use the free post-mortem template generator to fill in the fields and export straight to Markdown, Google Docs, Notion, or Confluence.

# Incident Post-mortem: [Brief Description]

## Key Facts

- **Severity:** [Sev-1/2/3]

- **Impact start:** [UTC timestamp]

- **Impact end:** [UTC timestamp]

- **Affected services:** [List]

- **On-call rotation:** [Primary/Secondary responders]

- **Customer impact:** [Minutes of downtime, users affected, revenue impact]

- **Status page:** [Link to updates]

## Executive Summary

[Write this last. 2-3 sentences for non-technical stakeholders: what broke, how bad it was, what you fixed, what you're doing to prevent recurrence.]

## Timeline

[Auditable and narrative. Include decision points, not just events.]

**2024-01-15**

- **14:23 UTC:** First alerts fire for elevated 5xx errors

- **14:25 UTC:** On-call engineer @jane investigates, sees database connection timeouts

- **14:28 UTC:** Incident declared, page sent to database team

- **14:30 UTC:** Database team confirms connection pool exhaustion

- **14:35 UTC:** Decision made to restart application servers rather than scale DB

- **14:42 UTC:** Traffic restored, monitoring for stability

- **14:50 UTC:** All-clear declared

[Link to incident channel, relevant PRs, dashboard snapshots]

## Contributors, Mitigators, Risks

### Contributors

- Database connection pool size hadn't been updated after recent traffic growth

- Deploy timing coincided with lunch-hour traffic spike

- No automated scaling for connection pools

### Mitigators

- Circuit breakers prevented complete service death

- Load balancer health checks failed fast

- On-call engineer had recently debugged similar connection issues

### Risks

- Manual connection pool tuning across 12 services

- No load testing that simulates real traffic patterns

- Single database instance for this service cluster

## Diagnostics and Evidence

[Screenshots of dashboards, log snippets, traces. Note what you couldn't determine.]

- Database CPU spiked to 95% at 14:23

- Connection pool metrics showed 0 available connections

- Application logs: "Connection timeout after 30s"

- **Unknown:** Why connection pool didn't auto-scale as configured

## Learnings

**Technical:**

- Connection pool auto-scaling was disabled in production config

- Our load testing doesn't account for connection overhead

**Coordination:**

- Database team response time was excellent

- Status page updates were delayed by 8 minutes

**Product:**

- Customer-facing error pages provided no useful information

- Mobile app handled the outage more gracefully than web

## Follow-ups

[SMART actions with owners and due dates]

- [ ] **@database-team:** Enable connection pool auto-scaling in prod **[Jan 22]**

- [ ] **@sre-team:** Add connection pool utilization to standard dashboards **[Jan 25]**

- [ ] **@qa-team:** Update load testing to include connection pool stress **[Feb 1]**

- [ ] **@comms-team:** Reduce status page update SLA from 15min to 5min **[Jan 30]**

**Theme tags:** [capacity, configuration, monitoring, communications]

## Appendix

- Architecture diagram showing connection flow

- Before/after configuration diffs

- Customer communication timeline

Calculate the business impact and revenue loss from customer minutes impacted. Link to your status page and use incident communication templates for consistency.
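The customer-minutes calculation can be sketched in a few lines. This example takes the first alert (14:23 UTC) and the all-clear (14:50 UTC) from the sample timeline as the impact boundaries, which is itself an assumption. The user count and revenue rate are hypothetical placeholders.

```python
from datetime import datetime


def impact_minutes(start_iso: str, end_iso: str) -> float:
    """Duration between two ISO 8601 timestamps, in minutes."""
    start = datetime.fromisoformat(start_iso)
    end = datetime.fromisoformat(end_iso)
    if end < start:
        raise ValueError("impact end precedes impact start")
    return (end - start).total_seconds() / 60


# Timestamps come from the example timeline; the values below are hypothetical.
duration = impact_minutes("2024-01-15T14:23:00+00:00", "2024-01-15T14:50:00+00:00")
users_affected = 1200            # hypothetical
revenue_per_user_minute = 0.02   # hypothetical, in dollars

customer_minutes = duration * users_affected
estimated_loss = customer_minutes * revenue_per_user_minute
print(f"{duration:.0f} min x {users_affected} users = {customer_minutes:.0f} customer-minutes")
print(f"Estimated revenue impact: ${estimated_loss:,.2f}")
```

Storing timestamps with an explicit UTC offset, as the template asks, keeps the subtraction unambiguous when responders sit in different time zones.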

How to run the incident review meeting

Keep it tight. 45 minutes maximum.

Structure:

- Pre-meeting prep: Send draft document, assign note-taker

- Summary walkthrough (5 min): Post-mortem owner presents executive summary

- Timeline review (20 min): Step through events, annotate live with questions

- Analysis discussion (15 min): Contributors, mitigators, risks discussion

- Action assignment (5 min): Log follow-ups with owners and dates

Meeting hygiene:

- Record for people who can't attend

- Direct questions to specific people, not "the room"

- Let improvements surface naturally, don't force brainstorming

- Assign owners before the meeting ends

Tight attendance. Invite responders, service owners, and stakeholders who need context. Everyone else can read the doc.

Observability data you'll actually need

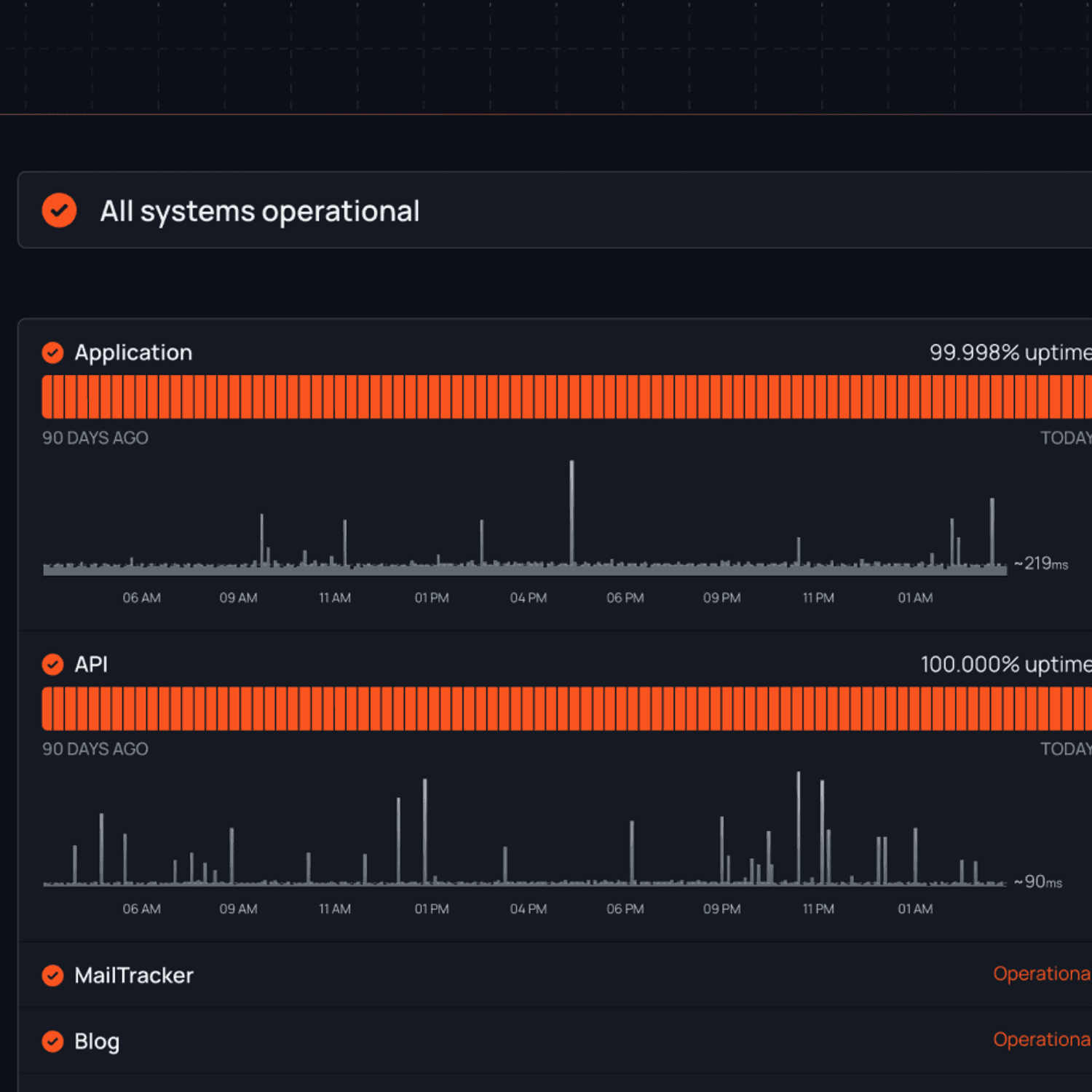

Set up proper uptime monitoring and synthetic monitoring to capture the right data automatically.

| Data Type | Collection Method | When Needed | Storage |

|---|---|---|---|

| Alerts fired | Monitoring system API | Immediately | Time-series DB |

| Deployment events | CI/CD webhooks | Timeline correlation | Event store |

| Infrastructure changes | Config management | Root cause analysis | Audit logs |

| Trace data | APM tools | Request flow analysis | Trace storage |

| Load balancer metrics | Cloud provider APIs | Traffic patterns | Metrics platform |

| Customer impact | Status page analytics | Impact assessment | Analytics DB |

| Log queries | Centralized logging | Debugging | Log retention |

| Dashboard snapshots | Monitoring screenshots | Evidence | Object storage |

Real-time context:

- Dashboard snapshots at key timeline moments

- Log queries that helped diagnose the issue

- Performance metrics before, during, and after

Historical context:

- Similar incidents from the past 6 months

- Recent changes to affected systems

- Traffic patterns and seasonal trends

Embed graphs and logs directly in the post-mortem document. Screenshots decay, but linked dashboards stay current.

Program-level metrics

Track your post-mortem program alongside SLA/SLO tracking, not just individual incidents.

Post-Mortem KPIs Dashboard

| Metric | Target | Current | Trend |

|---|---|---|---|

| MTTR | < 1 hour | 1.5 hours | ↓ |

| Time-to-draft | 48 hours | 72 hours | → |

| Time-to-publish | 7 days | 10 days | ↑ |

| Action item closure | 90% | 75% | ↓ |

| 90-day recurrence | < 5% | 8% | ↑ |
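Two of the rows above, time-to-draft and action item closure, fall out of data most teams already have. The sketch below assumes a hypothetical `PostMortem` record with these four fields, and the sample values are chosen to reproduce the "Current" column. Your tracker's schema will differ.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import median


@dataclass
class PostMortem:
    """Hypothetical record; field names are illustrative."""
    incident_end: datetime
    draft_at: datetime
    actions_total: int
    actions_closed: int


def time_to_draft_hours(pms: list[PostMortem]) -> float:
    """Median hours between the end of the incident and the first draft."""
    return median((pm.draft_at - pm.incident_end).total_seconds() / 3600 for pm in pms)


def action_closure_rate(pms: list[PostMortem]) -> float:
    """Closed action items as a fraction of all action items."""
    total = sum(pm.actions_total for pm in pms)
    closed = sum(pm.actions_closed for pm in pms)
    return closed / total if total else 0.0


# Made-up sample data: drafts landed 48, 72, and 96 hours after each incident.
pms = [
    PostMortem(datetime(2024, 1, 15, 14, 50), datetime(2024, 1, 17, 14, 50), 4, 3),
    PostMortem(datetime(2024, 2, 2, 9, 0), datetime(2024, 2, 5, 9, 0), 4, 3),
    PostMortem(datetime(2024, 3, 10, 22, 0), datetime(2024, 3, 14, 22, 0), 4, 3),
]

print(f"Time-to-draft (median): {time_to_draft_hours(pms):.0f} hours")
print(f"Action item closure: {action_closure_rate(pms):.0%}")
```

The median is used rather than the mean so that one badly delayed draft does not hide an otherwise healthy program. The 90-day recurrence metric is left out because it depends on how your team defines "the same incident".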

Industry Benchmarks

- Elite performers: MTTR < 1 hour

- High performers: MTTR < 1 day

- Medium performers: MTTR between 1 day and 1 week

Source: DORA State of DevOps 2021

Reliability outcomes:

- DORA metrics: Deployment frequency, lead time, change failure rate, MTTR

- Psychological safety correlation: Teams that feel safe reporting problems have better reliability metrics

Impact of Psychological Safety "Teams with high psychological safety are 47% more likely to engage in process improvements and 64% more likely to report near-misses" — DORA 2021

Trend analysis:

Tag incidents by trigger type:

- Configuration errors

- Deployment issues

- Capacity problems

- Third-party failures

- Risky migrations

Invest improvement effort where patterns cluster. If 40% of your Sev-1s are config-related, that's your highest-leverage fix. Monitor how this affects your 99.99% uptime targets or 99.999% uptime targets.
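Finding where patterns cluster is a counting exercise once incidents carry a trigger tag. A minimal sketch follows, assuming each incident is exported as a dictionary with `severity` and `trigger` keys. The incident IDs and data are invented for illustration.

```python
from collections import Counter

# Hypothetical export from an incident tracker.
incidents = [
    {"id": "INC-101", "severity": 1, "trigger": "configuration"},
    {"id": "INC-102", "severity": 1, "trigger": "deployment"},
    {"id": "INC-103", "severity": 2, "trigger": "capacity"},
    {"id": "INC-104", "severity": 1, "trigger": "configuration"},
    {"id": "INC-105", "severity": 1, "trigger": "third-party"},
    {"id": "INC-106", "severity": 1, "trigger": "capacity"},
]


def trigger_shares(records: list[dict], severity: int) -> dict[str, float]:
    """Share of incidents at the given severity per trigger tag, most common first."""
    tags = [r["trigger"] for r in records if r["severity"] == severity]
    if not tags:
        return {}
    return {tag: count / len(tags) for tag, count in Counter(tags).most_common()}


shares = trigger_shares(incidents, severity=1)
for tag, share in shares.items():
    print(f"{tag:15s} {share:.0%}")
```

In this made-up data set, configuration accounts for two of five Sev-1s, the 40% case described above. With only a handful of incidents the shares are noisy, so treat them as a prompt for discussion rather than a ranking.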

Public vs private post-mortems

Determine what to share based on your status page strategy and internal status page needs.

| Information Type | Public | Private | Notes |

|---|---|---|---|

| Impact timeline | Yes | Yes | Customer-visible events only for public |

| Technical root cause | Summary | Detailed | Avoid exposing attack vectors |

| Individual names | No | No | Use roles instead |

| System architecture | High-level | Detailed | Security through obscurity isn't security |

| Remediation steps | Yes | Yes | Shows commitment to improvement |

| Action items | Major items | All items | Public gets confidence-building items |

Public post-mortems (customer-facing):

- Timeline of customer impact

- What you fixed

- What you're doing to prevent recurrence

- Apology that acknowledges real impact

Keep private:

- Internal system details

- Individual names and decisions

- Competitive information

- Security vectors that could be exploited

Example done well: Cloudflare's June 2025 outage post-mortem. Clear timeline, specific technical details, concrete follow-ups. No fluff, no blame. See more status page examples from industry leaders.

Get legal review for public posts if you're in a regulated industry. But don't let legal review kill transparency entirely. Learn why you need a status page for customer trust.

Compliance and security incidents

TL;DR: ISO 27001 requires documented learning (A.5.27), preserve evidence for forensics, consider legal privilege, automate timeline calculations

Follow your organization's security and compliance practices for incident handling.

| Framework | Requirement | PM Component | Evidence |

|---|---|---|---|

| ISO 27001 | A.5.24: Planning and preparation | Documented process | PM template and process doc |

| ISO 27001 | A.5.27: Learning from incidents | Post-incident analysis | Completed PMs with actions |

| SOC 2 | CC7.*: System operations | Incident response process | PM records and metrics |

| NIST | SP 800-61r3: Post-incident activity | Learning and improvement | Trend analysis reports |

| GDPR | Article 33: Breach notification | 72-hour timeline | Automated timeline tracking |

Regulatory Timeline

- GDPR breach notification: 72 hours

- SOC 2 incident response: Documented process required

- ISO 27001: Annual review minimum

ISO 27001 mapping:

- Control A.5.24: Incident management planning and preparation (ISMS.online)

- Control A.5.27: Learning from information security incidents (Hightable)

SOC 2 expectations: CC7.* controls around system operations require documented incident response and learning processes (HiComply).

Security-specific considerations:

- Evidence preservation (don't clean up until forensics is complete)

- Chain of custody for investigation artifacts

- Breach notification timeline calculations

- Legal privilege considerations for internal communications
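The breach notification calculation from the list above is simple enough to automate. This sketch covers the GDPR Article 33 window of 72 hours after becoming aware of a breach. The function names and timestamps are illustrative, and deciding what counts as the moment of awareness is a judgment to settle with your privacy and legal teams.

```python
from datetime import datetime, timedelta, timezone

GDPR_NOTIFICATION_WINDOW = timedelta(hours=72)


def notification_deadline(aware_at: datetime) -> datetime:
    """Latest notification time in UTC, counted from the moment of awareness."""
    if aware_at.tzinfo is None:
        raise ValueError("aware_at must be timezone-aware")
    return aware_at.astimezone(timezone.utc) + GDPR_NOTIFICATION_WINDOW


def hours_remaining(aware_at: datetime, now: datetime) -> float:
    """Hours left before the deadline; negative once it has passed."""
    return (notification_deadline(aware_at) - now).total_seconds() / 3600


# Hypothetical timestamps for illustration.
aware_at = datetime(2024, 1, 15, 14, 28, tzinfo=timezone.utc)
now = datetime(2024, 1, 16, 14, 28, tzinfo=timezone.utc)

print(f"Notify by: {notification_deadline(aware_at):%Y-%m-%d %H:%M} UTC")
print(f"Hours remaining: {hours_remaining(aware_at, now):.0f}")
```

Rejecting naive timestamps is deliberate: a deadline computed from an ambiguous local time is the kind of error that only surfaces when the window has already closed.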

NIST SP 800-61r3 emphasizes post-incident learning loops within risk management (NIST Publications). Your post-mortems become inputs to risk assessments and control effectiveness reviews.

Tooling and automation checklist

Tool integration checklist

- [ ] Alert aggregation from monitoring systems

- [ ] Timeline generation from event streams

- [ ] Dashboard snapshot automation

- [ ] Ticket creation for action items

- [ ] Publishing workflow to documentation platform

- [ ] Follow-up tracking and aging reports

- [ ] Trend analysis dashboard updates

- [ ] Stakeholder notification workflows

Auto-assemble first draft:

- Pull timeline from monitoring alerts

- Attach deployment history and infrastructure changes

- Export dashboard snapshots automatically

- Populate metadata (severity, duration, impact)
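As a rough illustration of the first step, the sketch below merges two event streams into the timeline format used in the template. The `at` and `text` keys are assumed for the example. Real monitoring and chat exports will need their own adapters.

```python
from datetime import datetime, timezone

# Hypothetical exports; the event text is taken from the template's example timeline.
alerts = [
    {"at": "2024-01-15T14:23:00+00:00", "text": "First alerts fire for elevated 5xx errors"},
    {"at": "2024-01-15T14:50:00+00:00", "text": "All-clear declared"},
]
chat_decisions = [
    {"at": "2024-01-15T14:35:00+00:00",
     "text": "Decision made to restart application servers rather than scale DB"},
    {"at": "2024-01-15T14:28:00+00:00", "text": "Incident declared, page sent to database team"},
]


def build_timeline(*streams: list[dict]) -> str:
    """Merge event streams, sort by time, and render Markdown bullets in UTC."""
    events = sorted(
        (event for stream in streams for event in stream),
        key=lambda event: datetime.fromisoformat(event["at"]),
    )
    lines = []
    for event in events:
        at = datetime.fromisoformat(event["at"]).astimezone(timezone.utc)
        lines.append(f"- **{at:%H:%M} UTC:** {event['text']}")
    return "\n".join(lines)


print(build_timeline(alerts, chat_decisions))
```

A generated timeline is only a first draft. The decision points and the reasoning behind them still have to come from the people who were there.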

Integration points:

- Slack incident management or Teams channels for capturing real-time decisions

- PagerDuty integration or Opsgenie integration for responder data

- Status page API for customer communication timeline

- Issue tracker integration for follow-up actions

- Confluence/Notion for final publishing

- Escalation policies to trigger reviews

The goal: reduce documentation overhead so teams actually do post-mortems consistently. Use the right incident management tools and escalation policies to automate the heavy lifting.
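Follow-up tracking and aging reports, from the checklist above, can start as a short script before you adopt a dedicated tool. This sketch reuses the follow-ups from the template example. The year, the `done` flags, and the report date are assumptions added for illustration.

```python
from datetime import date

# Follow-ups from the template example; the year and completion status are assumed.
follow_ups = [
    {"owner": "@database-team", "task": "Enable connection pool auto-scaling in prod",
     "due": date(2024, 1, 22), "done": False},
    {"owner": "@sre-team", "task": "Add connection pool utilization to standard dashboards",
     "due": date(2024, 1, 25), "done": True},
    {"owner": "@qa-team", "task": "Update load testing to include connection pool stress",
     "due": date(2024, 2, 1), "done": False},
    {"owner": "@comms-team", "task": "Reduce status page update SLA from 15min to 5min",
     "due": date(2024, 1, 30), "done": False},
]


def overdue_items(items: list[dict], today: date) -> list[tuple[int, dict]]:
    """Open items past their due date as (days_overdue, item), oldest first."""
    late = [((today - item["due"]).days, item)
            for item in items
            if not item["done"] and item["due"] < today]
    return sorted(late, key=lambda pair: pair[0], reverse=True)


report = overdue_items(follow_ups, today=date(2024, 2, 3))
for days, item in report:
    print(f"{days:3d} days overdue  {item['owner']}: {item['task']}")
```

Sorting by age puts the longest-stalled item at the top, which is usually the one worth raising in the next review.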

Advanced: Learning from success

Safety-II Principle "Things go right more often than they go wrong. Study why things usually work to make them work better when they don't." (PMC)

Traditional post-mortems focus on failure. Safety-II thinking asks: what went right during this incident?

Questions to add:

- What improvised mitigations worked better than expected?

- Which team members showed adaptive capacity under pressure?

- What informal communication channels proved essential?

- Which monitoring alerts were actually helpful vs noise?

Example: During the database connection pool incident, the on-call engineer's recent experience with similar issues let them diagnose quickly. That's not luck, that's adaptive capacity. How do you institutionalize that knowledge transfer?

Learn from resilience, not just brittleness. Your best mitigations often come from understanding what made the incident less bad than it could have been. Review how our customers build resilient incident response.

Real-world learning gallery

| Company | Year | Incident | Key Learning | Link |

|---|---|---|---|---|

| Slack | 2021 | Packet loss cascade | Network issues amplify into application failures; monitor network as leading indicator | Slack Engineering |

| GitLab | 2017 | Database deletion | Radical transparency builds trust; live-streaming recovery showed commitment | GitLab Blog |

| Cloudflare | 2025 | KV storage failure | Specific remediation with dates beats vague promises | Cloudflare Blog |

| Various | - | Multiple examples | Blameless culture drives better outcomes | Google SRE Book |

Common anti-patterns to avoid

| Anti-Pattern | Why It's Bad | Better Approach |

|---|---|---|

| "Be more careful" | Doesn't change system conditions | Design systems that prevent errors |

| "Add more tests" (vague) | No clear action or metric | "Add integration tests for X scenario" |

| "Do training" (generic) | Doesn't address specific gaps | "Document X process, train on Y tool" |

| "User error" | Blames human, ignores design | "System allowed invalid action" |

| "Should have known better" | Hindsight bias | "Documentation was unclear about X" |

| Over-fitting to last incident | Solves yesterday's problem | Look for systemic patterns |

| Rush to action items | Missing full context | Let analysis breathe before committing |

No soak time: Rushing into action items before understanding the full system context. Let analysis breathe before committing to solutions.

FAQs

What's the difference between post-mortem, RCA, and after-action review?

Post-mortem is the document. RCA is the analytical method. After-action review is the meeting format. Same goal: learning from incidents.

How fast should we publish?

Aim for a draft with the timeline and basic analysis within 48-72 hours, and a final version with all action items within 7-10 days. Speed matters less than thoroughness.

Do five whys still help?

Sometimes, for simple linear failures. For complex system interactions, prefer "how" questions and multi-causal analysis. Ask "what conditions contributed" instead of "why did this happen."

What metrics actually move reliability?

DORA metrics for delivery performance. Action item closure rates and recurrence trends for learning effectiveness. Psychological safety surveys for team health.

Key terms and definitions

| Term | Definition |

|---|---|

| Blameless culture | Focus on system improvement rather than individual fault |

| MTTR (Mean Time To Recovery) | Average time to restore service after an incident |

| Severity levels | Classification system for incident impact (Sev-1 highest) |

| Incident Commander | Person responsible for coordinating incident response |

| SLO/SLA/SLI | Service Level Objective/Agreement/Indicator for reliability targets |

| DORA metrics | DevOps Research metrics: deployment frequency, lead time, MTTR, change failure rate |

| Just Culture | Balance between accountability and psychological safety |

| Safety-II | Focus on why things usually go right, not just why they fail |

| Contributing factors | Conditions that increased likelihood of incident |

| Mitigators | Factors that limited incident impact |

Related reading

- Incident communication templates — templates for communicating during incidents

- MTTR guide — measure and reduce your mean time to resolution

- Escalation policies guide — build a framework for routing incidents

- Best incident management tools — compare incident response platforms

- DevOps alert management — reduce alert fatigue

- SLA vs SLO vs SLI — understand the reliability metrics referenced in post-mortems

- Incident management hub — all our incident response resources